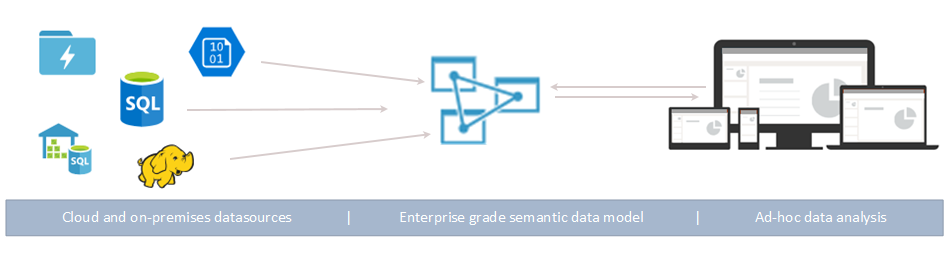

As more and more data becomes available, managing it gets harder. Investing in advanced technologies and services allows you to extract more value from data, so modern enterprises need to embrace effective tools, technologies, and new approaches to succeed. This is where Azure Data Factory comes in. With this service, you can orchestrate data processes and then analyze the data to get insights. In this article, you will learn more about Data Factory, its benefits, and the best practices you should follow to succeed.

## What is a Data Factory?

Azure Data Factory is an ETL (extract, transform, and load) tool. Simply put, an ETL tool takes data from different sources, transforms it into meaningful information, and loads it into destinations such as data lakes and data warehouses. The service manages all the steps needed before clean, prepared data can be used for business needs. For instance, you can get a Power BI report that helps you make informed business decisions.

Data Factory is a scalable ETL solution consisting of components such as pipelines, activities, datasets, and triggers. The platform is used for serverless data migration and transformation activities, including:

- Developing code-free ETL processes in the cloud.
- Creating visual data transformation logic.
- Executing a pipeline from Azure Logic Apps.
- Reaching continuous integration and delivery (CI/CD).

It is fair to say that you need Data Factory on almost every cloud project. Apache Airflow is a similar service where you can also arrange pipelines, but it is more difficult to use because it is not code-free: you need to know Python to create any pipelines. So, for teams that want a code-free option, Azure Data Factory is arguably the most efficient data orchestrator available.

Data Factory is a tool that orchestrates data, which makes it easier to structure the data and get insights. On the vast majority of cloud projects, you need to move data across different networks (on-premises to the cloud) and services (Blob storage, data lakes, data warehouses, etc.).

The main benefits of using Data Factory are the following:

The tool manages all the drivers required to integrate with Oracle, MySQL, SQL Server, or other data stores. As a result, Data Factory can be used with most databases and any cloud, and it is compatible with a wide range of supplementary tools, such as Databricks. What's more, although it is an Azure product, it can be used with any cloud (AWS or GCP). It is a service used to process and transform large amounts of data as well as store them. Databricks also allows exploring unstructured data (e.g., sounds, images) through ML models.

In data management and control, accessibility is critical. Azure Data Factory offers a global cloud presence, with data movement available in over 25 countries and protected by Azure security infrastructure.

The tool allows creating roles and assigning specific permissions to them. The roles are contributor, owner, and administrator. For example, billing information is available only to authorized users.
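To make the extract, transform, and load flow described in this article more concrete, here is a minimal sketch in plain Python. It does not use Azure Data Factory or its SDK. The sample data and the function names (`extract`, `transform`, `load`, `run_pipeline`) are hypothetical and only illustrate how a pipeline chains activities over a dataset.

```python
import csv
import io
from collections import defaultdict

# Hypothetical raw export standing in for a source system.
RAW_CSV = """region,amount
north,100.50
south,
north,25.25
east,40
"""


def extract(text):
    """Extract: read CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: skip rows with a missing amount, then total per region."""
    totals = defaultdict(float)
    for row in rows:
        if not row["amount"]:
            continue  # incomplete record, not loaded downstream
        totals[row["region"]] += float(row["amount"])
    return dict(totals)


def load(totals, destination):
    """Load: write the result into a dict standing in for a data warehouse."""
    destination.update(totals)
    return destination


def run_pipeline(text, destination):
    """Pipeline: run the three activities in order."""
    return load(transform(extract(text)), destination)


warehouse = {}
run_pipeline(RAW_CSV, warehouse)
print(warehouse)  # {'north': 125.75, 'east': 40.0}
```

In a real Data Factory project these steps would be configured as activities in a pipeline and started by a trigger rather than written by hand. The sketch only shows the order of operations.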